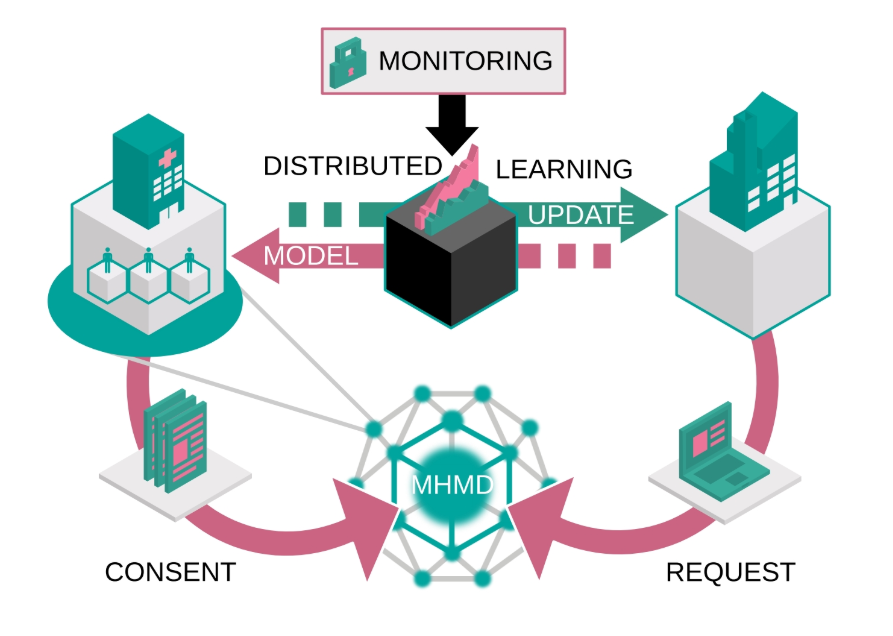

Like secure computation, distributed learning addresses issues that arise when data is shared, such as privacy, consent, storage, transfer and curation. Traditional machine learning performs all computation in a centralized location. Distributed learning instead relies on multiple data providers, each holding part of the data, who are willing to collaborate and perform machine learning with their joint resources. The data never leaves its provider's premises, so each provider retains full control over every access.

A novel black-box approach to distributed learning was developed during the project. A third party can supply an executable deep learning model to an orchestrator as a black box that computes both a loss function and its gradient. The orchestrator sends the model to the data providers for local evaluation. Special care is taken to execute the third-party software in an isolated environment and to monitor its output. Before intermediate training results are communicated back to the third party, the orchestrator averages the results of several isolated black-box instances, making it extremely hard for the third party to gain any knowledge about individual data samples.
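
The averaging step can be illustrated with a minimal Python sketch. It is not the project's implementation: the names `linear_black_box`, `orchestrate_round`, `train` and the `min_providers` threshold are hypothetical, a tiny linear model stands in for the deep learning model, and the isolation and output monitoring of the black box are not modelled at all. The sketch only shows that the third party sees averaged losses and gradients, never per-provider results.

```python
from typing import Callable, List, Sequence, Tuple

Params = List[float]
Sample = Tuple[float, float]  # one (x, y) pair held by a data provider
BlackBox = Callable[[Params, Sequence[Sample]], Tuple[float, Params]]


def linear_black_box(params: Params, data: Sequence[Sample]) -> Tuple[float, Params]:
    """Hypothetical third-party model: y = w*x + b with mean squared error.

    Returns the loss and its gradient with respect to (w, b).
    """
    w, b = params
    n = len(data)
    residuals = [(w * x + b - y, x) for x, y in data]
    loss = sum(r * r for r, _ in residuals) / n
    grad_w = sum(2 * r * x for r, x in residuals) / n
    grad_b = sum(2 * r for r, _ in residuals) / n
    return loss, [grad_w, grad_b]


def orchestrate_round(
    black_box: BlackBox,
    params: Params,
    provider_datasets: Sequence[Sequence[Sample]],
    min_providers: int = 2,
) -> Tuple[float, Params]:
    """Evaluate the black box once per provider and return only the averages."""
    if len(provider_datasets) < min_providers:
        # Assumption: averaging over a single provider would reveal its result.
        raise ValueError("too few providers to average over")
    # In a real deployment each call would run on the provider's premises,
    # inside an isolated environment; here it is a plain function call.
    results = [black_box(list(params), data) for data in provider_datasets]
    k = len(results)
    avg_loss = sum(loss for loss, _ in results) / k
    avg_grad = [sum(grad[i] for _, grad in results) / k for i in range(len(params))]
    return avg_loss, avg_grad


def train(
    black_box: BlackBox,
    params: Params,
    provider_datasets: Sequence[Sequence[Sample]],
    lr: float = 0.05,
    rounds: int = 2000,
) -> Params:
    """Third-party side: gradient descent using only averaged gradients."""
    for _ in range(rounds):
        _, grad = orchestrate_round(black_box, params, provider_datasets)
        params = [p - lr * g for p, g in zip(params, grad)]
    return params


if __name__ == "__main__":
    # Three providers, each holding a few samples of y = 2x + 1.
    providers = [
        [(0.0, 1.0), (1.0, 3.0)],
        [(2.0, 5.0), (3.0, 7.0)],
        [(-1.0, -1.0), (1.0, 3.0)],
    ]
    print(train(linear_black_box, [0.0, 0.0], providers))
```

Averaging alone is not a formal privacy guarantee; how hard it makes inference about individual samples depends on the number of providers and samples contributing to each average.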